Introducing Lens Agents: The governed platform for running AI agents on enterprise systems

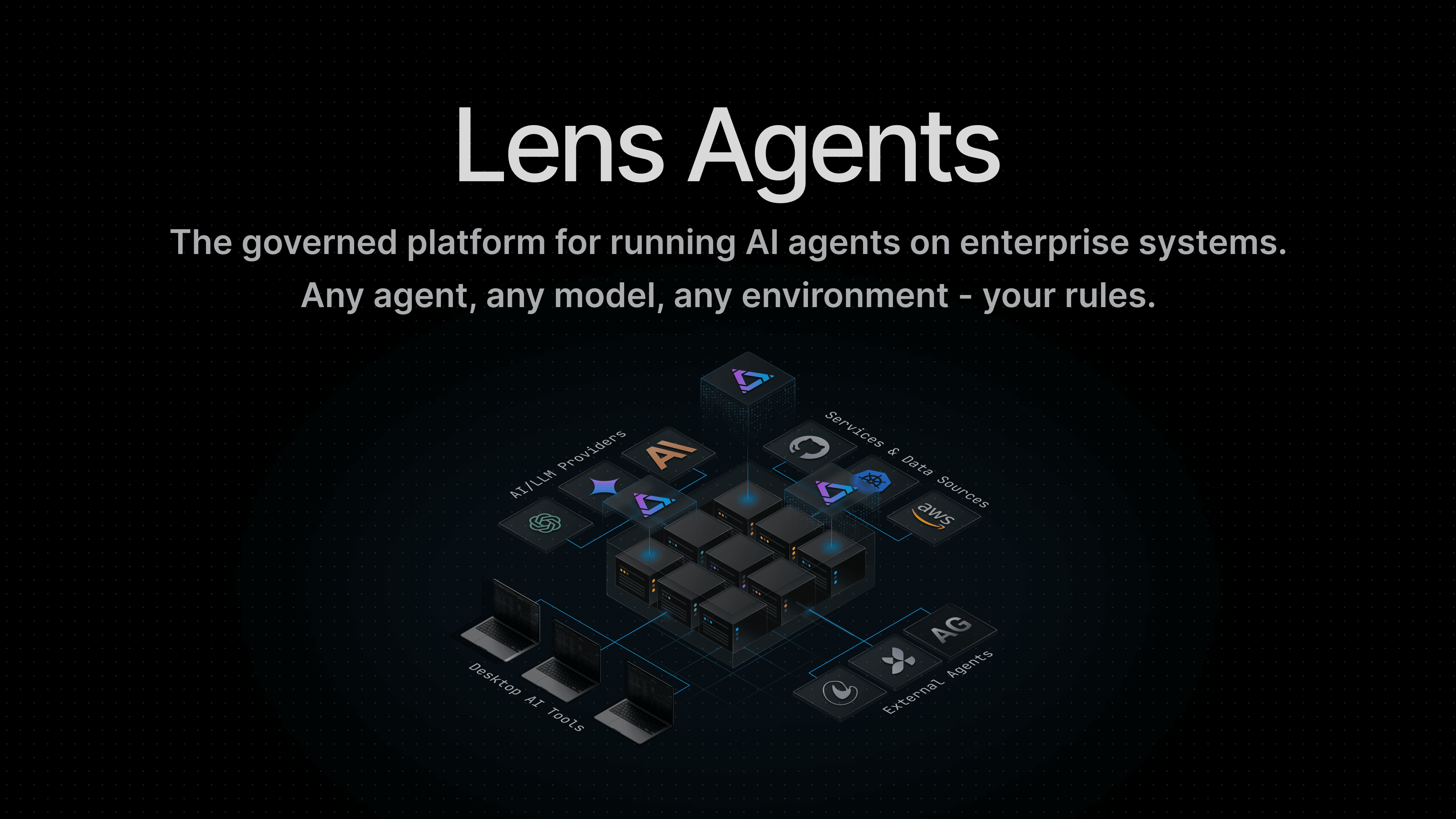

Today we’re introducing Lens Agents, the governed platform for running AI agents on enterprise systems: any agent, any model, any environment, your rules.

AI agents are already inside the enterprise. They are reading tickets, querying production systems, reviewing code, checking cloud infrastructure, and pulling answers from internal tools. They are showing up across engineering, support, IT, operations, and business teams, running on laptops, in clouds, and inside internal environments.

That shift is not coming. It is here.

The real question is not whether enterprises will use AI agents. The real question is whether those agents will run governed or ungoverned.

That is the problem Lens Agents was built to solve.

Lens Agents is the governed platform for running AI agents on enterprise systems. Any agent. Any model. Any environment. Your rules.

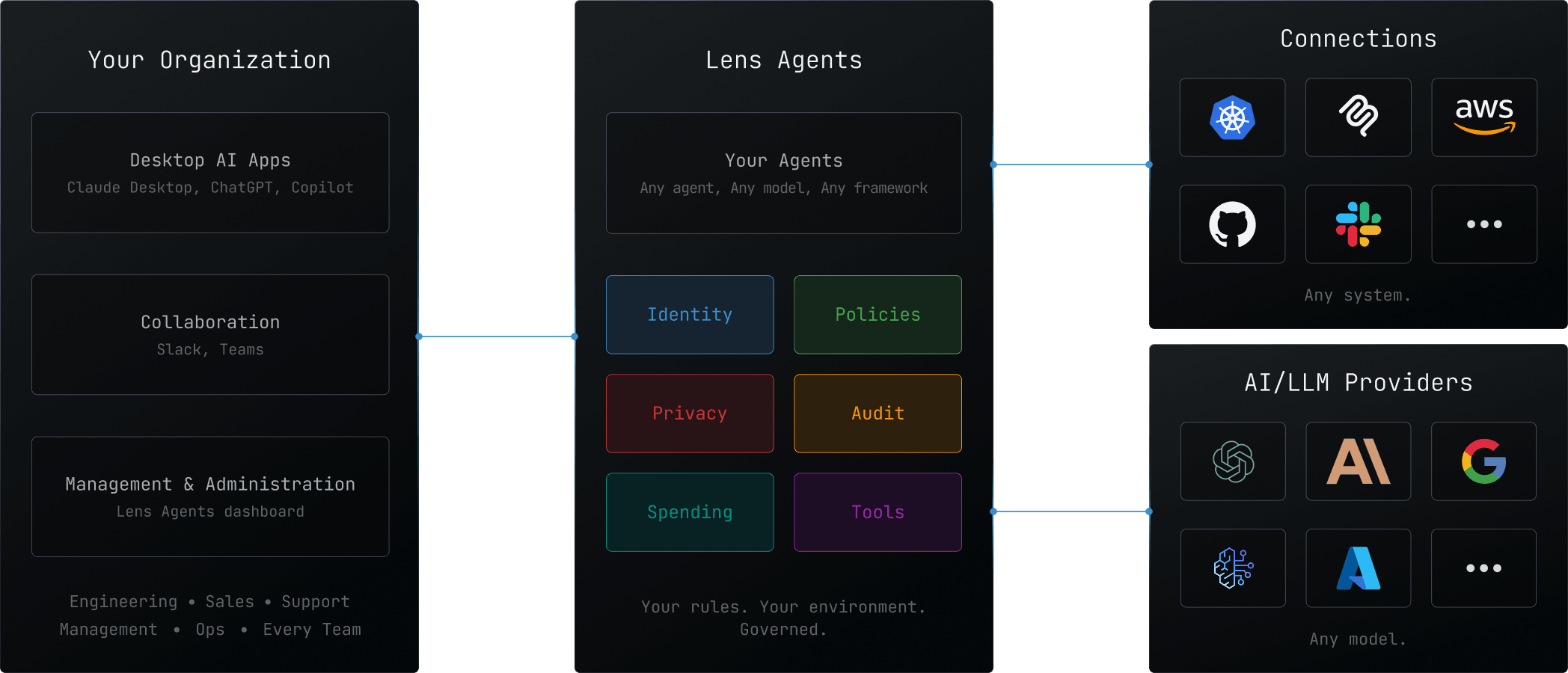

A governed agent platform will become a foundational layer of the modern enterprise stack. Every organization will need a way to let agents do real work on real systems without handing over personal credentials, opening uncontrolled paths into internal systems, or losing the audit trail. Lens Agents gives every agent what it needs to do that work: tools to take action, connectivity to the systems where business context lives, and governance built in from the start.

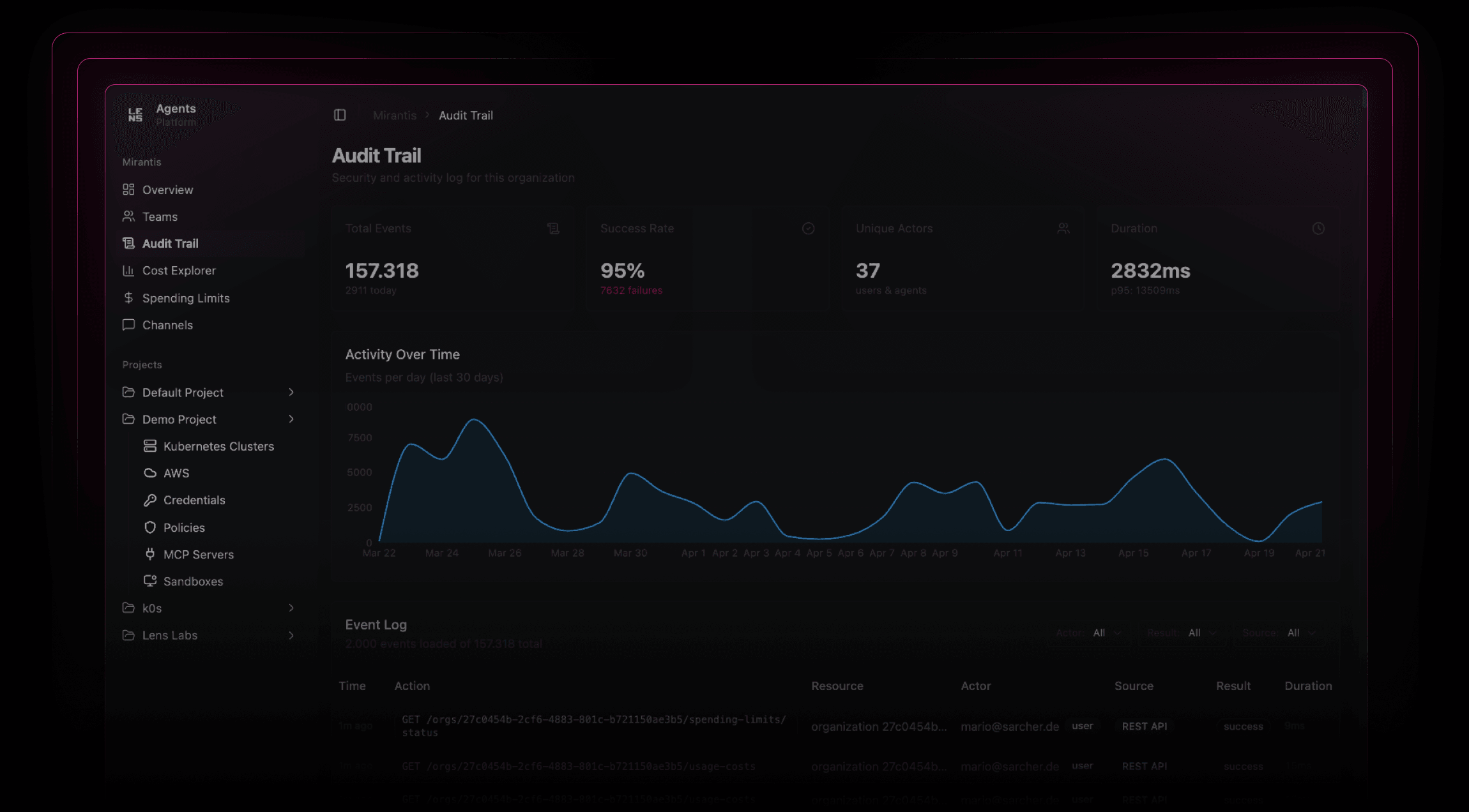

Lens Agents is available now for enterprises that want to move from experiments to production with identity, policy-controlled access, privacy controls, and a full audit trail.

AI agents need more than a model and a prompt

An agent that does meaningful work needs more than intelligence. It needs a governed place to run, tools to take action, connectivity to real systems, identity, boundaries, and an audit trail.

In the enterprise, that runtime cannot rely on personal credentials, hold open-ended access to enterprise systems, or move sensitive data without controls.

Without governance, AI agents become another source of sprawl: hard to see, hard to control, and risky to trust with production systems, customer data, and internal operations.

That is not a side problem. It is the central infrastructure problem of enterprise AI adoption.

Lens Agents gives enterprises a better path: teams can adopt AI agents across the organization while IT keeps governance, visibility, and policy control from day one.

That matters even more as agent usage spreads across desktops, clouds, and managed runtimes. A Claude Code session on a laptop, an external agent running in your cloud, and a managed agent on Lens Agents should not require different governance models. They should be governed by the same platform, the same policies, and the same audit trail. Lens Agents applies one governance plane across them all.

What Lens Agents does

Lens Agents gives every agent the two things it needs to do real work:

- Tools to fetch data, take actions, and manipulate information

- Connectivity to the systems where business context lives

Those capabilities are governed by design.

From there, the platform follows three clear principles:

- Connectivity so agents can work on real enterprise systems

- Governance so IT sees everything and controls everything

- Flexibility so teams can use their preferred agents, models, and environments

With Lens Agents, organizations can run all three kinds of agents:

- Desktop AI tools like Claude Desktop, ChatGPT, Copilot, Cursor, Claude Code, and Codex

- External agents built with any framework and running anywhere

- Managed agents created directly on Lens Agents

Whether the agent starts on a desktop, in another runtime, or directly on Lens Agents, the governance model stays the same.

That means:

- Every agent has its own identity

- Access to systems is policy-controlled

- Execution is sandboxed

- Credentials are isolated from the agent

- Sensitive data can be filtered, masked, or blocked

- Every action is captured in a full audit trail

- Spending can be tracked and controlled

The result is simple: teams can use AI agents against real enterprise systems, while IT still sees everything, controls everything, and enforces the rules.

Under the hood, that governance is enforced in the runtime itself. Lens Agents uses kernel-enforced sandboxing, default-deny networking, and server-side credential injection so credentials never need to enter the agent process. This is not a platform that watches and reports after the fact. It enforces the boundary before the action happens.
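To make default-deny networking and server-side credential injection concrete, here is a minimal sketch in Python. It is not the Lens Agents implementation: the `EgressProxy` and `Rule` names, the rule shape, and the bearer-token header are all assumptions chosen for illustration. The idea it shows is that a request leaves the sandbox only if an explicit rule allows it, and the credential is attached on the proxy side, so the agent never holds it.

```python
from dataclasses import dataclass, field
from urllib.parse import urlsplit


@dataclass(frozen=True)
class Rule:
    """Hypothetical allow rule: a host plus the HTTP methods and path prefix permitted on it."""
    host: str
    methods: frozenset
    path_prefix: str = "/"


@dataclass
class EgressProxy:
    """Hypothetical proxy sitting between the sandbox and the network."""
    rules: list
    secrets: dict = field(default_factory=dict)  # host -> token, stored outside the sandbox

    def is_allowed(self, method: str, url: str) -> bool:
        parts = urlsplit(url)
        host = parts.hostname or ""
        path = parts.path or "/"
        # Default deny: a request passes only if some rule explicitly matches it.
        return any(
            host == r.host
            and method.upper() in r.methods
            and path.startswith(r.path_prefix)
            for r in self.rules
        )

    def forward(self, method: str, url: str, headers: dict) -> dict:
        """Return the headers that would be sent upstream, or raise if policy denies the request."""
        if not self.is_allowed(method, url):
            raise PermissionError(f"denied by policy: {method} {url}")
        outbound = dict(headers)
        token = self.secrets.get(urlsplit(url).hostname or "")
        if token is not None:
            # The credential is added here, on the proxy side of the boundary.
            outbound["Authorization"] = f"Bearer {token}"
        return outbound


# Example policy: read-only access to repository endpoints on one host.
proxy = EgressProxy(
    rules=[Rule("api.github.com", frozenset({"GET"}), "/repos/")],
    secrets={"api.github.com": "example-token"},
)
```

In this sketch, `proxy.forward("GET", "https://api.github.com/repos/acme/app", {})` returns headers that include the injected token, while a `DELETE` to the same URL or any request to an unlisted host raises `PermissionError` before anything is sent.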

Built for how enterprises actually use AI

Most AI agent platforms start from the assumption that agents live in one cloud, use one model stack, and follow one preferred framework.

That is not how most enterprises operate.

Real organizations have agents running on laptops and in the cloud. They use multiple model providers. They depend on Kubernetes, AWS, GitHub, SaaS applications, internal APIs, and on-prem systems that all matter to the workflow. They need one platform that can govern agents consistently across all of it.

That is exactly what Lens Agents is built for.

Under your rules, Lens Agents supports:

- Any environment — desktop, public clouds, or on-premises

- Any model — Claude, GPT, Gemini, Llama, or self-hosted models

- Any agent — managed agents, external agents, desktop AI tools, and agents built with any MCP-compatible framework

- Any connections you approve — Kubernetes, AWS, GitHub, CRM, ticketing, internal APIs, and other enterprise systems through policy-controlled access

Your teams can use the agents they want. Your organization keeps the rules consistent.

What enterprise readiness means in practice

Enterprises do not need another AI demo. They need a governed platform they can roll out with confidence.

Lens Agents is designed for that bar.

- Governed access with domain-level and HTTP-level policy controls

- Agent identity separate from user credentials

- Sandboxed execution for safer operation

- Credential isolation through server-side injection

- Audit trails across tool calls, API requests, shell commands, and model usage

- Spending controls at the org, team, and agent level

- Enterprise administration with multi-tenant organization structure, SSO, and SCIM

- Deployment flexibility for enterprise customers, including self-hosted options

- Trust foundation backed by SOC 2 Type 1 and ISO 27001

This is the standard AI agent platforms need to meet before enterprises can trust them with meaningful work. That standard also covers privacy and compliance from the start. Lens Agents includes policy-driven controls for sensitive data and PII, and it is built to support the auditability, oversight, and governance foundations enterprises need as regulations like the EU AI Act take effect.
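As a rough illustration of what a policy-driven control for sensitive data can look like, the Python sketch below applies a per-category action (mask, filter, or block) to text before it reaches an agent. Everything here is an assumption made for the example: the `apply_privacy_policy` function, the policy dictionary, the two regular expressions, and the choice to mask by default are hypothetical and do not describe how Lens Agents detects or handles PII.

```python
import re

# Illustrative patterns only; real PII detection needs far more than two regexes.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

VALID_ACTIONS = {"allow", "mask", "filter", "block"}


class BlockedContent(Exception):
    """Raised when policy says the payload must not reach the agent at all."""


def apply_privacy_policy(text: str, policy: dict) -> str:
    """Apply one action per category; categories missing from the policy are masked."""
    for category, pattern in PATTERNS.items():
        action = policy.get(category, "mask")  # assumption: mask when unspecified
        if action not in VALID_ACTIONS:
            raise ValueError(f"unknown action {action!r} for {category}")
        if action == "allow" or not pattern.search(text):
            continue
        if action == "block":
            raise BlockedContent(f"payload contains {category}")
        if action == "mask":
            # Replace each match with a category placeholder.
            text = pattern.sub(f"[{category.upper()}]", text)
        else:
            # "filter": remove each match entirely.
            text = pattern.sub("", text)
    return text


masked = apply_privacy_policy(
    "Contact jane@example.com about 123-45-6789",
    {"email": "mask", "ssn": "mask"},
)
```

With this policy, `masked` is `"Contact [EMAIL] about [SSN]"`. Switching a category to `"block"` raises `BlockedContent` instead, which is the stricter choice when the data should never leave its source system.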

Built because we needed it

Lens Agents comes from the team behind Lens Kubernetes IDE (a.k.a. Lens K8S IDE), used by 1M+ users.

That matters because we did not arrive at this problem as observers. We hit it ourselves.

As we moved to an AI-native operating model internally, we needed our agents to operate on real systems: Kubernetes clusters, cloud accounts, codebases, internal APIs, and operational workflows. That meant assuming the worst from the start. If an agent is going to run kubectl, execute shell commands, build its own tools, install packages, or automate work against production-adjacent systems, you cannot afford to treat it like a harmless assistant.

You have to assume something will eventually go wrong. A command that looks innocent can pull in a compromised dependency. A tool can reach farther than intended. A host machine can become the weak point. For a company trusted by major technology organizations around the world, that is not an acceptable risk model.

So we built the stack we were missing: governed sandboxes, policy-controlled access, credential isolation, audit trails, and the controls to let agents do real work without trusting them by default.

What started as infrastructure we needed for our own AI-native operations became Lens Agents.

Managed agents on Lens Agents are not fixed-function bots.

An SRE agent, a data analyst, a support specialist, or a role unique to your business all come from the same core model, shaped by the agent’s behavior, connections, tools, and autonomy level. You decide what systems it can access, what tools it can use, how it should behave, and how independently it can act.
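One way to picture an agent shaped by behavior, connections, tools, and autonomy level is as a small declarative spec. The Python sketch below is purely illustrative: the `AgentSpec` fields, the three `Autonomy` levels, and the `decide` logic are assumptions, not the Lens Agents data model.

```python
from dataclasses import dataclass
from enum import IntEnum


class Autonomy(IntEnum):
    """Hypothetical levels; a real platform may define autonomy differently."""
    SUGGEST = 1      # proposes actions, a human runs them
    SUPERVISED = 2   # runs read-only actions, asks before changing anything
    AUTONOMOUS = 3   # runs any permitted action without asking


@dataclass(frozen=True)
class AgentSpec:
    name: str
    behavior: str           # instructions describing how the agent should act
    connections: frozenset  # systems the agent may reach
    tools: frozenset        # tools the agent may call
    autonomy: Autonomy

    def decide(self, tool: str, connection: str, mutating: bool) -> str:
        """Return "deny", "ask", or "run" for a proposed action."""
        # Anything outside the declared tools and connections is refused outright.
        if tool not in self.tools or connection not in self.connections:
            return "deny"
        if self.autonomy == Autonomy.AUTONOMOUS:
            return "run"
        if self.autonomy == Autonomy.SUPERVISED and not mutating:
            return "run"
        return "ask"


# Example: a supervised SRE agent limited to one cluster and one tool.
sre = AgentSpec(
    name="sre-agent",
    behavior="Investigate alerts and propose fixes.",
    connections=frozenset({"staging-cluster"}),
    tools=frozenset({"kubectl"}),
    autonomy=Autonomy.SUPERVISED,
)
```

Here `sre.decide("kubectl", "staging-cluster", mutating=False)` returns `"run"`, the same call with `mutating=True` returns `"ask"`, and any tool or connection outside the spec returns `"deny"`. The same structure with different values would describe a data analyst or a support specialist.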

Learn more

To see how Lens Agents works, visit the Lens Agents product page or go deeper in the documentation. If your team is evaluating how to bring AI agents into production with governance built in, talk to us.

Lens Agents is here for enterprises now. Self-service is coming soon.

AI agents will become part of how every enterprise operates. The leaders in this shift will be the companies that put the right governed platform in place first, not the ones that wait to adopt agents.

Any agent. Any model. Any environment. Your rules.